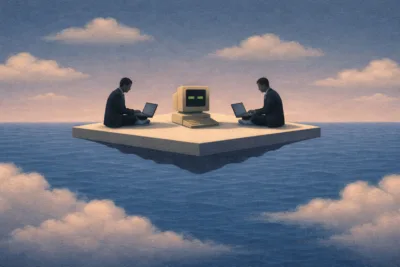

A colleague who doesn't exist

When exactly do we start treating AI as a team member? Not as a tool we use. Not as a system we work with, but as someone who is one of us.

We might assume that the answer lies in technical capabilities: that AI becomes a “collaborator” once it crosses a certain threshold of competence, for example when it solves problems at an expert level, understands context, and responds intelligently to unforeseen situations. But research recently published in Computers in Human Behavior suggests otherwise, and its findings shift the whole discussion from engineering to social psychology.

AI becomes a member of a team not when it reaches a competency threshold. It becomes a member of a team when people begin to attribute a mind to it.

The key concept is mind perception. Researchers distinguish two dimensions of it: agency (the ability to think, act, and make decisions) and conscious experience (having intentions, feelings, and internal states). Only when both dimensions are sufficiently perceived can AI meet the basic criterion of teamwork: being a “member” rather than infrastructure. This changes everything. The status of “collaborator” is a social construct, something that exists in people’s perceptions, not in the code of an algorithm. The same AI system can be treated as a tool by one person and as a colleague by another. The difference lies not in the AI, but in us.

This has profound implications for system design. The label “assistant” elicits different responses than “partner” or “team member.” The way AI communicates (whether it speaks in the first person, refers to its “thoughts,” or apologizes for mistakes) shapes how people perceive its “mind.” The interface, the voice, even the name given to the system: all of these affect perception, and perception determines whether AI will be treated as part of “we” or as an “it.”

But the researchers also clearly articulate the risks: excessive trust, emotional attachment, and ethical doubts about a relationship with something that does not really have a mind. The future of human–AI teams will not be defined solely by technological advances. It will be defined by how we design and manage perception, through decisions, sometimes subtle and sometimes unconscious, about how we present AI to the people who are supposed to work with it.

A colleague who doesn’t exist, yet one we treat as if it did. Perhaps this is the best definition of AI in a team: an imaginary presence with real-world consequences. Whether those consequences benefit us depends on how wisely we navigate between illusion and reality.

This post is part of the project “People and Algorithms in Organisations: Competences to Work in the Digital Environment” (DIGIT_People and algorithms), funded by NAWA – Narodowa Agencja Wymiany Akademickiej (Polish National Agency for Academic Exchange).

#AI #ArtificialIntelligence #humanAIhuman #collaboration #teammate #teamwork #DIGIT #UEP #PUEB