When a person discriminates, they leave traces. But what if the algorithm is discriminatory?

The story of Amazon’s recruitment system has already become a canonical example, a warning that should hang in every conference room where decisions are made to implement AI in areas that affect people. The company wanted to create a “holy grail of recruitment,” a tool that would automatically select the best candidates. They trained the model on ten years of CV data and didn’t notice that most of those CVs came from men. The model learned that male candidates are “better.” Not because someone told it so directly. It learned this on its own, drawing conclusions from patterns in the data. It began to lower the scores of CVs containing the word “women’s” (as in “women’s chess club” or “women’s college”). It favored certain phrases that were more common in CVs written by men. It did exactly what the data taught it: it recreated the past. Amazon eventually abandoned the system. But the broader question remains. How many similar systems are currently in operation in thousands of companies, making decisions about employment, loans, and access to services? Are we biased?

Researchers distinguish several types of algorithmic bias:

- Historical bias – AI learns from the past and recreates its inequalities.

- Representation bias – Groups that are underrepresented in the data become “less visible” to the model.

- Proxy bias – Seemingly neutral features (words, university names, writing style) become hidden indicators of gender, race, origin.

- Black box bias – Even when we remove explicit biases, the model can reproduce them in a way we don’t understand.

- Automation bias – The tendency of organizations to trust algorithmic results more than human judgment.

The last of these is particularly worrying. The algorithm has an aura of objectivity that a human decision lacks. When a recruiter says “this candidate doesn’t suit me,” you can ask them why, you can question their judgment. When an algorithm gives a low rating, it’s harder to ask a question. After all, the system “only processes data.” Mathematics is neutral. Right? No. Mathematics is only as neutral as the data it operates on and the goals it has been set. And data is always historical; it is a record of what people did in the past, with all the baggage of prejudice, stereotypes, and inequality. When we tell the algorithm “find patterns of success,” it finds patterns, including the patterns of discrimination that led to that success.
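To make the “find patterns of success” point concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the toy `HISTORY` data is invented, and the keyword log-odds scorer (`learn_weights`, `score`) is a deliberately simple stand-in, not Amazon’s actual system. Note that the data contains no gender column at all, yet the scorer still learns to penalize the phrase “women’s chess club.”

```python
import math
from collections import Counter

# Hypothetical toy history: (CV keywords, was the candidate hired?).
# There is no gender field, but past decisions were skewed against
# CVs that mention "women's" activities.
HISTORY = [
    ({"python", "chess club"}, True),
    ({"python", "chess club"}, True),
    ({"java", "chess club"}, True),
    ({"java", "rowing"}, False),
    ({"python", "women's chess club"}, False),
    ({"java", "women's chess club"}, False),
    ({"python", "women's college"}, False),
    ({"python", "women's college"}, True),
    ({"java", "women's college"}, False),
]


def learn_weights(history, smoothing=1.0):
    """Learn one weight per keyword: the smoothed log-odds of being hired."""
    hired, rejected = Counter(), Counter()
    for keywords, was_hired in history:
        (hired if was_hired else rejected).update(keywords)
    vocabulary = set(hired) | set(rejected)
    return {
        word: math.log((hired[word] + smoothing) / (rejected[word] + smoothing))
        for word in vocabulary
    }


def score(cv_keywords, weights):
    """Score a CV as the sum of its keyword weights (unknown words add 0)."""
    return sum(weights.get(word, 0.0) for word in cv_keywords)


weights = learn_weights(HISTORY)

# Two CVs that differ only in the name of the chess club.
cv_a = {"python", "chess club"}
cv_b = {"python", "women's chess club"}

print("chess club:        ", round(score(cv_a, weights), 3))
print("women's chess club:", round(score(cv_b, weights), 3))
```

With this toy data the second CV scores lower than the first, although the two differ only in a phrase that acts as a proxy for gender. Nobody told the scorer to discriminate; it simply reproduced the pattern in the historical decisions.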

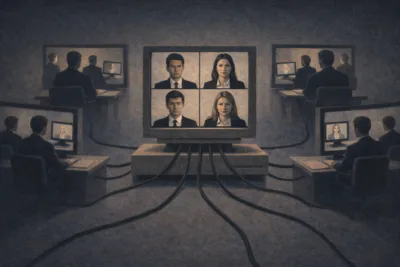

Scale is a key element. A biased recruiter may reject dozens of candidates a year. The algorithm processes millions of applications. Discrimination, which in the hands of a human would be a series of individual injustices, in the hands of AI becomes a systemic barrier: invisible, untouchable, difficult to prove in court. And this leads to a fundamental question that we rarely ask directly: are we ready to manage AI in areas where human fate is at stake? It’s not just about the explainability of models. It’s about recognizing that AI is not a neutral tool; it’s a mirror of our past, with all its shadows. And that by entrusting it with decisions about people, we do not eliminate prejudices. We hand them over to a machine that will execute them faster, more quietly, and on a scale that no human could achieve.

This post is part of the project “People and Algorithms in Organisations: Competences to Work in the Digital Environment”, funded by the NAWA – Narodowa Agencja Wymiany Akademickiej (Polish National Agency for Academic Exchange). #AI #ArtificialIntelligence #algorithm #bias #discrimination #DIGIT #UEP #PUEB